In a changing local search landscape, your brand isn’t just a “nice to have”—it’s your differentiator.

Algorithms are evolving. Zero-click SERPs are becoming more common through AI Overviews (AIO) and Search Generative Experience (SGE), and consumers’ social awareness has shifted. Having a recognizable and trustworthy brand can make or break your local visibility in 2025.

In our recent Live Masterclass: How Important is Brand for Local Search Visibility in 2025?, expert panellist Elizabeth Rule unpacked how brand strength influences local SEO performance.

Here are the top takeaways from Elizabeth’s session to help you align your branding efforts with your local SEO goals—and get found by more customers in 2025.


Brand Is As Important Now As It Always Has Been

Why is everyone talking about brand right now?

The hype around AIOs and SGE, alongside the increase in zero-click search, has brought brand right into focus. A good example of this is how Forbes tends to rank across multiple AIO searches and continues to show up after various algorithm updates. It feels like Google is favoring bigger brands with more domain authority over smaller brands.

Alongside this, Google is launching a new brand profile through Merchant Center (not all local businesses will be able to use this), which is a clear indicator that Google is shifting its focus toward what a brand can bring to a topic or industry in search results.

Remember: Brand is just as important as it always has been. Google has always cared about brands and will continue to do so in the future.

Tip 1: A Strong Brand Is More than Just Your Logo

Having a strong brand means people know and trust your business. They’re more likely to click on your listing or your website than on those of a brand they don’t know.

Trust in and awareness of your brand can come from the local community, your review profile, and zero-click search.

Tip: Even if someone searches for you and doesn’t click on your website, they need to be able to contact you from the search results. Having a completed Google Business Profile that aligns your brand in the local pack with the organic results will help with this.

Tip 2: Tap into Communities

Offline communities, online communities, and social media are all great ways to get your brand out to your target audience.

Brands that use more traditional marketing, such as billboards and branded vehicles, tend to do a little better in SEO because more people are aware of and engaged with the brand in general. This engagement helps you rank better: the more people click through to your website, the higher up in the SERPs you’ll show.

Spread your marketing efforts beyond Google and your website. Local social media groups and community forums, like Facebook groups, Nextdoor, or local subreddits, are great ways to get your brand out there.

Tip: It’s useful to engage in online communities. Whether you’re answering questions or helping people, you can use these forums to build trust with the community. If someone has read your helpful answer online, they’re more likely to click your brand in search results.

Tip 3: Your Brand Website Is Critical

Getting your website up to date is crucial, as it’s a valuable source of truth for Google. Mention the important information about your business—who you are, what you do, the services you offer, and where you do it. Make it easy for Google and your customers to understand all of this information.

While this information helps Google build its organic results and helps customers move further down the funnel, it could also help your brand if and when it appears in AIOs. It isn’t known what ranking factors Google uses for AIOs, or how it selects the information that appears in them, but it is known that Google sometimes pulls through incorrect information. An accurate, up-to-date website gives Google a reliable source to draw on.

Remember: Make sure your NAP (name, address, and phone number) is correct on both your website and Google Business Profile. This is important for both Google and your customers. A lack of consistency in business information can cause confusion and distrust.
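
If you’d like to check this yourself, here is a minimal Python sketch of a NAP consistency check. It assumes you have copied the details from your website and your Google Business Profile by hand. The business details, field names, and the `nap_mismatches` helper are all hypothetical and only there for illustration. Nothing here talks to Google.

```python
import re


def normalize_phone(phone: str) -> str:
    """Keep digits only, so formatting differences don't count as mismatches."""
    return re.sub(r"\D", "", phone)


def normalize_text(value: str) -> str:
    """Lowercase, drop punctuation, and collapse repeated whitespace."""
    cleaned = re.sub(r"[^\w\s]", "", value.lower())
    return " ".join(cleaned.split())


def nap_mismatches(website: dict, profile: dict) -> list:
    """Return the NAP fields that differ between the two listings."""
    checks = {
        "name": normalize_text,
        "address": normalize_text,
        "phone": normalize_phone,
    }
    return [
        field
        for field, normalize in checks.items()
        if normalize(website.get(field, "")) != normalize(profile.get(field, ""))
    ]


# Hypothetical details, typed in by hand from each source.
website = {
    "name": "Example Decorators",
    "address": "12 Example St., Toronto",
    "phone": "(416) 555-0100",
}
profile = {
    "name": "Example Decorators",
    "address": "12 Example St, Toronto",
    "phone": "416-555-0100",
}

print(nap_mismatches(website, profile))  # prints []
```

The check is deliberately forgiving about punctuation and phone formatting, so it only flags differences a customer would actually notice. It won’t treat “St” and “Street” as the same thing, so a flagged field still needs a human look.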

Tip 4: Become an Authority in Your Sector

Showcase your authority, knowledge, and understanding of your sector through your website and your brand entity. For example, your website is a great place to publish well-crafted content that answers the questions your customers and potential customers have.

Tell your audience how to do things and show that you know how to do them best. Take a decorator as an example: explain to your audience how they can do the decorating themselves, but also show your authority and expertise in case they’d prefer you to do it for them. Become the go-to brand for knowledge and education.

Remember: Google looks for signals of Experience, Expertise, Authoritativeness, and Trustworthiness (E-E-A-T) when assessing content quality. Showcasing your knowledge and expertise is a great way to demonstrate your authority, which is a win for both Google and your end user.

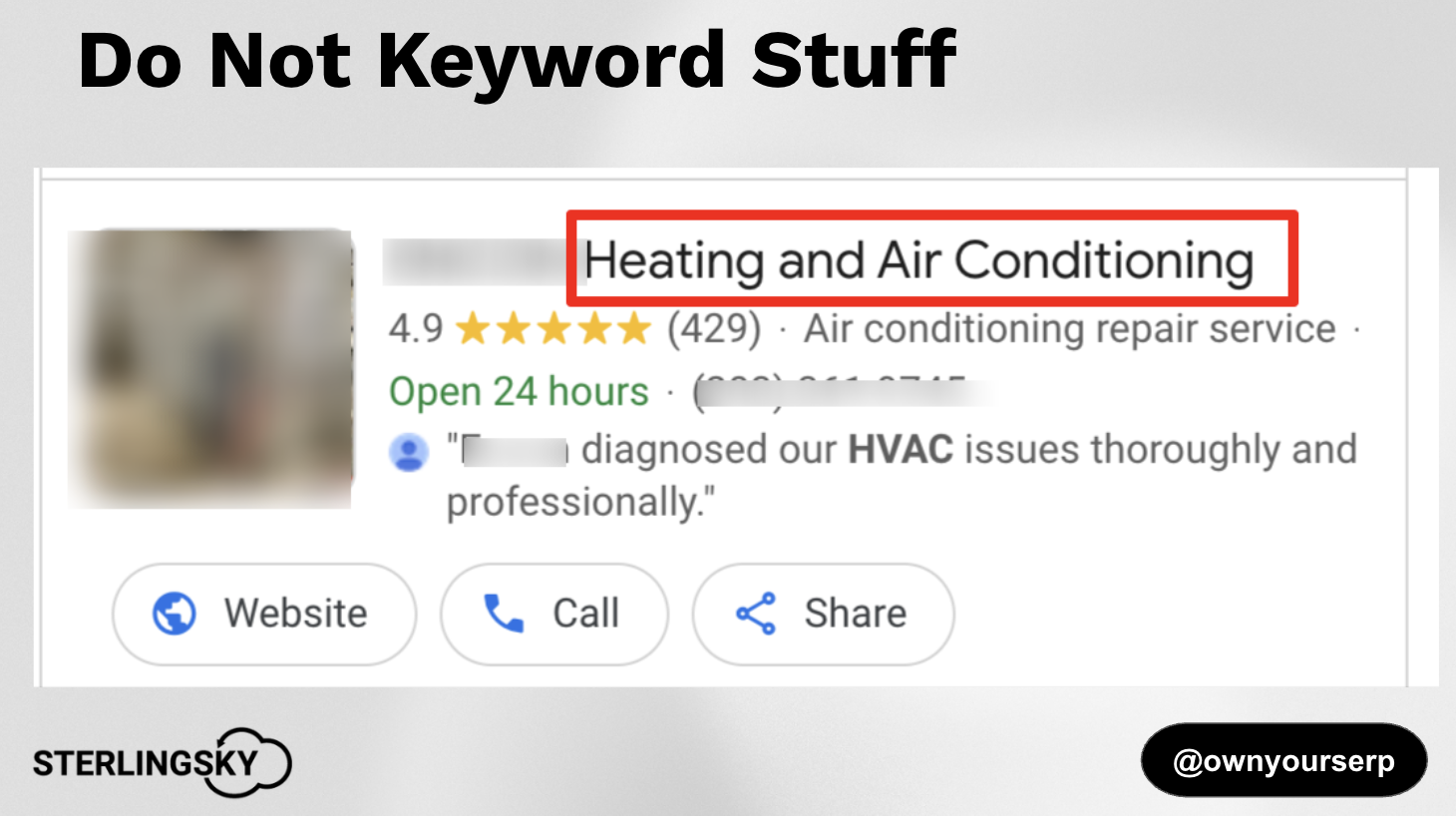

Tip 5: Should You Use Keywords in Business Names?

Your business name is a huge part of your brand, and you want to make sure your business profile appears at the top of search results. With that in mind, adding one or two top-converting keywords to your business name means you can have a keyword-rich Google Business Profile.

An example of this would be to add a unique brand modifier to your name. This could be ‘Tarquin Heating and Air Conditioning’ as opposed to ‘Toronto Heating and Air Conditioning’.

You must go through the official steps to make this change, and you must make sure you follow the guidelines. Do not stuff your business name. I repeat, no keyword stuffing your GBP name!

This is a type of Google Business Profile spam!

An oldie, but a goodie… do not do this!

Tip 6: Online Reputation Is Crucial for Brand

Your brand reputation shows potential customers how well you do business, and reflects your brand as a whole. That’s why reviews are critical for brand, and something you really shouldn’t ignore.

Getting new Google reviews regularly is a ranking factor, and according to the 2025 Local Consumer Review Survey, 27% of consumers would use a business if they could see new reviews from the past month.

So, while you don’t have full control over your Google reviews, you can control how you manage your reputation. Whether that’s responding kindly to a negative review, responding with gratitude for positive reviews, or asking your customers to leave a review for you, reputation can help build trust and conversions.

Some business owners respond to negative reviews with sass or humour, but this doesn’t give people a good feeling about the brand or make them want to do business with it. An empathetic and kind review response may make people consider using you, as it reflects your brand and the experience someone might get if they buy from you.

All in all, brand-building isn’t a quick SEO fix. It’s a strategic, long-term investment that pays off in trust, engagement, and higher-quality traffic. Having a strong brand will impact the way potential customers perceive you, remember you, and engage with you.

If you need help building your brand, get in touch with our local SEO services team to discover how we can support your goals.