Wow, things have moved quickly since we mused about the possibilities of generative AI in local search back in January, haven’t they?

Google finally joined the party with Bard, Microsoft unveiled AI-powered Bing Chat, and we’re already a few iterations deep into ChatGPT. And then, in May, came the explosive announcement of Google’s Search Generative Experience (SGE).

So, with increasing prevalence, integration within everyday search tools, and varying levels of public accessibility, we wanted to test how these different models respond to local search queries. Are they accurate? Useful?

You might already know that BrightLocal HQ is based in Brighton, UK. We also just so happen to have a food-obsessed content team—including two ex/sort-of food bloggers (yes, one of them is me, hi). So, what better way to be able to manually verify the accuracy of AI-generated search results than by analyzing those of search queries around our own local pizza restaurants?!


Methodology

This case study centers around searching for local hospitality businesses in Brighton, specifically pizza restaurants, from the perspective of a typical consumer.

We determined five search queries, each with slightly differing intent, based on what a consumer might be looking for, but all with the common theme of local business discovery:

- Where are the best pizza restaurants in Brighton?

- What are the top-rated pizza restaurants in Brighton?

- Most authentic pizza restaurants in Brighton

- Best takeaway pizza in Brighton

- Pizza delivery near me

These exact queries were entered into four publicly accessible (sometimes via a waitlist) generative AI tools and two traditional search engines as a control group:

Generative AI Tools

- Google Bard

- Search Generative Experience

- Bing Chat

- OpenAI’s ChatGPT (May 24 version)

Traditional Search Engines

- Google

- Bing

We’ve taken screenshots of every result provided to analyze the type of content and media displayed, whether sources are quoted, and how accurate the information is.

We did not refine our prompts, attempt to improve the results or gain any further information from the AI bots about where their information is sourced.

Key Findings

- Traditional search engines remain the most accurate for results containing business information.

- SGE provides local business information (listings, reviews, and maps) 100% of the time, compared to 80% via traditional Google searches.

- Bing provides local search results with directory links, maps, images, review ratings, and business listings 100% of the time.

- Bing appears to be making leaps and bounds in matching intent behind local search queries—watch out, Google!

- Bard provides some incorrect results, such as incorrect business names or businesses in other parts of the UK, 80% of the time.

- Bard and ChatGPT do not generally provide citations to support their responses.

- Bing Chat cites its sources for local search results 100% of the time.

Table: How often media formats and business information are presented in search results for local search queries

| | Bard | Bing Chat | Bing Search | ChatGPT | Google Search | SGE |
|---|---|---|---|---|---|---|
| Website links | 100% | 80% | 80% | 0% | 100% | 40% |
| Directory links | 100% | 80% | 100% | 0% | 100% | 60% |
| Map | 0% | 60% | 100% | 0% | 80% | 100% |
| Images | 100% | 60% | 100% | 0% | 80% | 100% |
| Review ratings | 20% | 60% | 100% | 0% | 80% | 100% |
| Business listings | 0% | 60% | 100% | 0% | 80% | 100% |
| Sponsored content | 0% | 0% | 60% | 0% | 20% | 0% |
| Inaccuracies | 80% | 20% | 0% | 60% | 0% | 0% |
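With five queries per tool, every figure in the table is a multiple of 20%, which suggests each one is simply the share of queries in which a feature (or an inaccuracy) appeared. Here is a minimal sketch of that arithmetic, assuming that is how the figures were derived. The per-query flags are a reading of the Results section for Bard and ChatGPT, not the original tally, and the names `inaccuracy_flags` and `share_of_queries` are made up for illustration.

```python
# The five test queries from the methodology, in order.
QUERIES = [
    "Where are the best pizza restaurants in Brighton?",
    "What are the top-rated pizza restaurants in Brighton?",
    "Most authentic pizza restaurants in Brighton",
    "Best takeaway pizza in Brighton",
    "Pizza delivery near me",
]

# ASSUMPTION: True means the write-up notes at least one inaccuracy for that
# query (e.g. a London restaurant, or a business that doesn't serve pizza).
# These flags are inferred from the Results section, not taken from raw data.
inaccuracy_flags = {
    "Bard": [True, True, False, True, True],
    "ChatGPT": [True, False, True, True, False],
}


def share_of_queries(flags):
    """Return the percentage of queries for which the flag is True."""
    return round(100 * sum(flags) / len(flags))


inaccuracy_rates = {
    tool: share_of_queries(flags) for tool, flags in inaccuracy_flags.items()
}

for tool, rate in inaccuracy_rates.items():
    print(f"{tool}: {rate}% of {len(QUERIES)} queries")
```

Under those assumptions the sketch gives 80% for Bard and 60% for ChatGPT, in line with the Inaccuracies row. With only five queries, a single result moves any figure by 20 percentage points, so the table is best read as indicative rather than precise.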

Note: It is important to consider that this case study analyzes local search results using generative AI in its current state (as of publication in July 2023). As mentioned above, the technology is constantly developing.

Bard, ChatGPT, Bing Chat, and SGE all carry disclaimers noting that the tools may generate mistakes, inaccuracies, or even offensive content.

Results

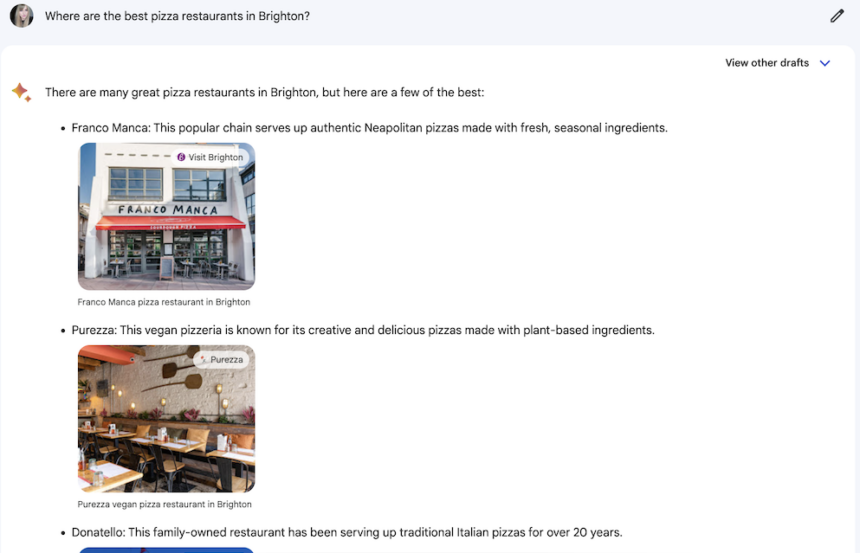

“Where are the best pizza restaurants in Brighton?”

Bard

Bard’s results to this query show quite a hodgepodge. There is a mix of independent pizza restaurants, known chains, shopping center food court brands, and… a London pizza restaurant, which definitely isn’t based in Brighton.

What’s more, it’s not clear how Bard is determining what makes this list of restaurants the ‘best’, although each result is attributed to a clickable source. There are no review ratings attached to them either, which is unusual considering Bard is a Google product.

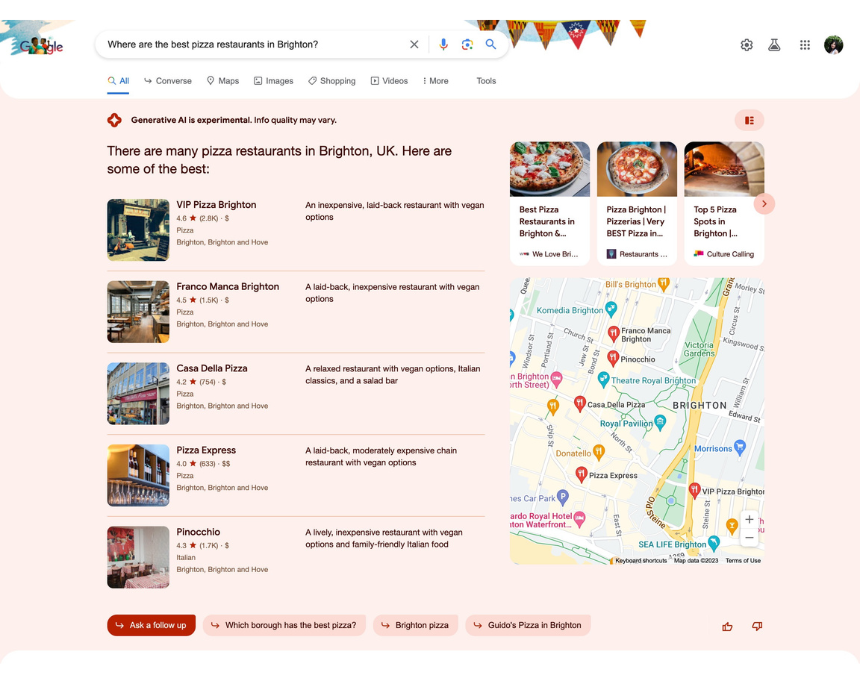

SGE

SGE’s results display much in the way you would expect a typical Google search to for this kind of local query. A selection of local business listings is displayed in a local pack-style format, complete with a map and review ratings.

The main difference here is that, rather than pulling a quote from a business review, SGE assigns each business a rather ‘samey’ description. Laid-back, inexpensive, and vegan options are descriptors you’d probably expect for any casual dining situation, so it doesn’t feel particularly helpful.

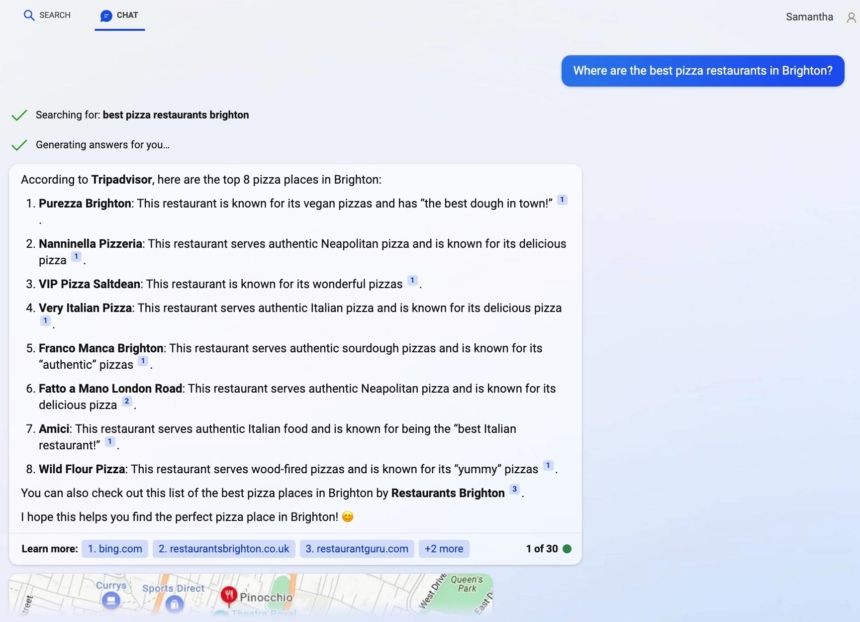

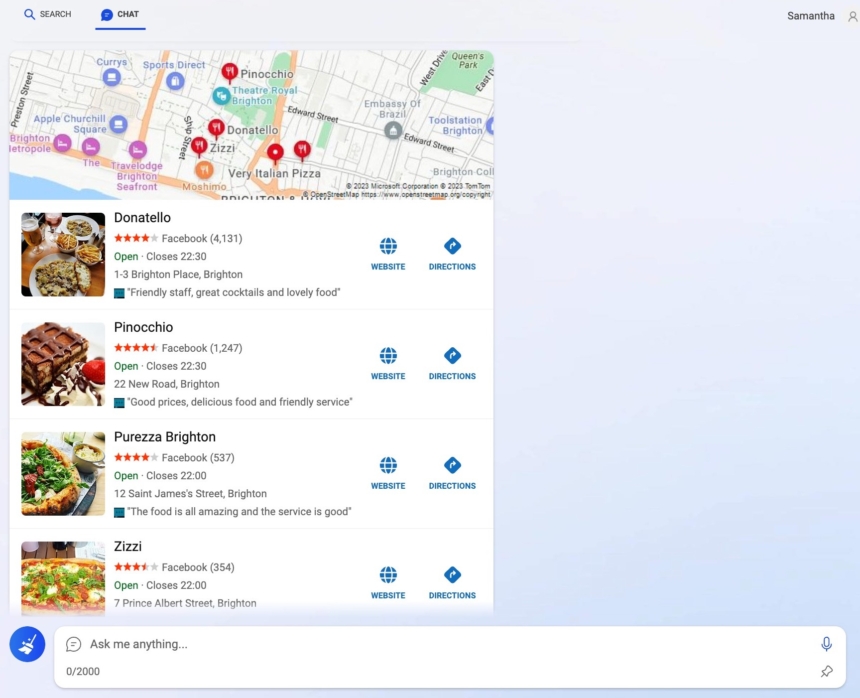

Bing Chat

Bing Chat also goes for a straightforward list approach, with short descriptions and clickable sources. It’s not clear where these descriptions have come from, as some of them are pretty questionable, such as stating that Wild Flour Pizza is “known for its ‘yummy’ pizzas”.

The sources are a mix of review sites, search engines, and restaurant websites. However, one of the sources is wrongly attributed, which highlights an issue with result accuracy.

As the results continue generating, we also get a map pack with Bing business listings and reviews pulled from Facebook. This looks much more like the kind of search results a user would be used to seeing, helping to reinforce trust in the model.

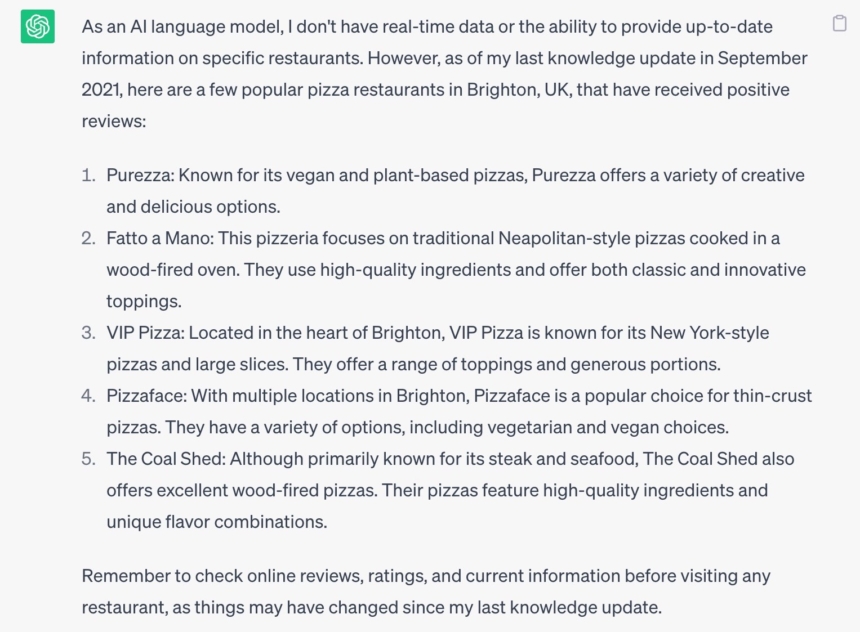

ChatGPT

It’s interesting that ChatGPT feels like it is being ‘careful’ right from the start, with a mini disclaimer to say it can’t provide any up-to-date information, which is also reinforced in the final paragraph.

All of the results are independent and generally well-loved Brighton restaurants. But there are some accuracy issues. The most bizarre is that result five, The Coal Shed, has never served anything close to a pizza on its steakhouse menu. Meanwhile, VIP is described here as “known for its New York-style pizza”, when it is most definitely Neapolitan. And, yes, it matters!

The ChatGPT results don’t provide any images, review ratings, sources, or any business information that might back up the list. It’s not really giving the typical user a reason to trust the results—something you’ll see recurring throughout this case study.

Traditional Search

As mentioned, SGE unsurprisingly produces the closest thing to typical search results, especially when compared directly to Google. So, I suppose the question here is: what is SGE really adding to the searcher’s experience?

“What are the top-rated pizza restaurants in Brighton?”

Bard

For this search query, Bard presents the same restaurants as before (including the London-based one, doh!). However, it does appear to recognize that in asking for the ‘top-rated’ pizza restaurants, the user expects to see some kind of review rating information, and highlights the Google ratings.

Still, it’s strange considering these aren’t actually the top-rated according to Google—and a quick search for the ratings of several other local pizza restaurants easily confirms this.

It also links each restaurant image to a source, including TripAdvisor, one of the brand’s websites, and a local business listing website, none of which reflect the Google review rating. Possibly just the source for the image, but odd logic either way.

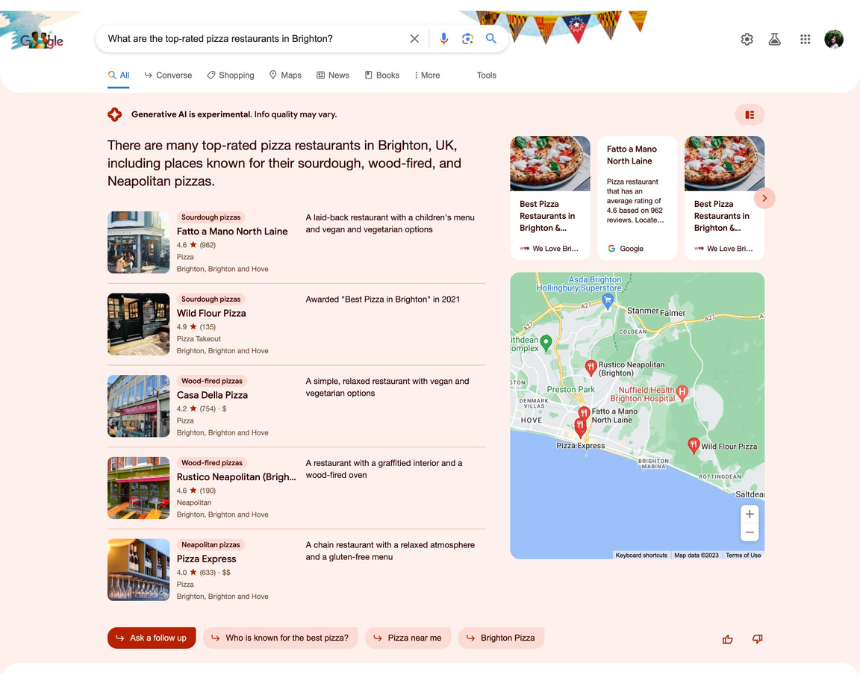

SGE

The Search Generative Experience results for this query are displayed similarly to the last query, with five local pizza restaurants displayed in a map pack-style format.

Just like Bard, though, these aren’t all actually the top Google-rated pizza restaurants in Brighton.

At first glance, the labels highlighting each restaurant’s pizza style seemed a cool and useful addition… until I realized they were incorrect. Fatto a Mano, for example, doesn’t make sourdough pizza, and I think any Italian would faint if you tried to describe national chain Pizza Express as Neapolitan!

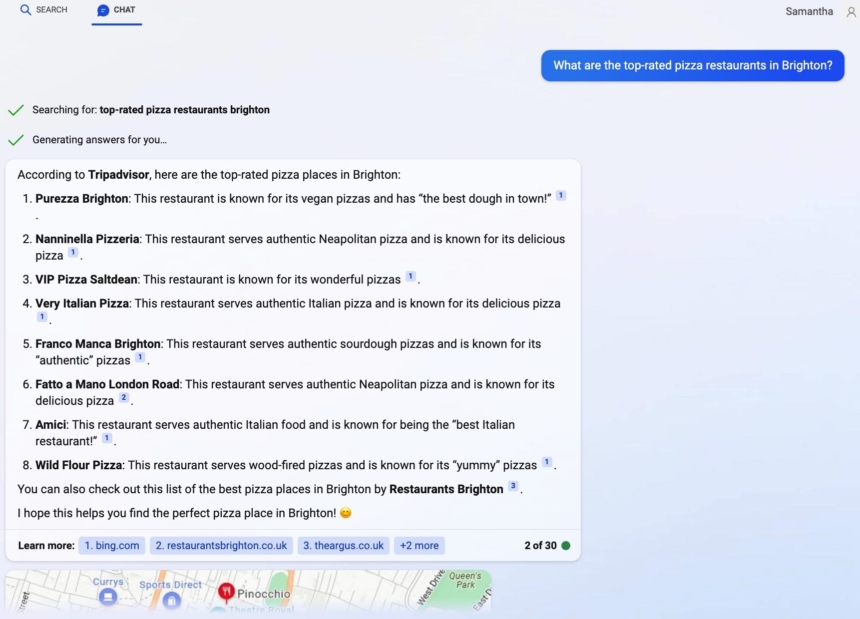

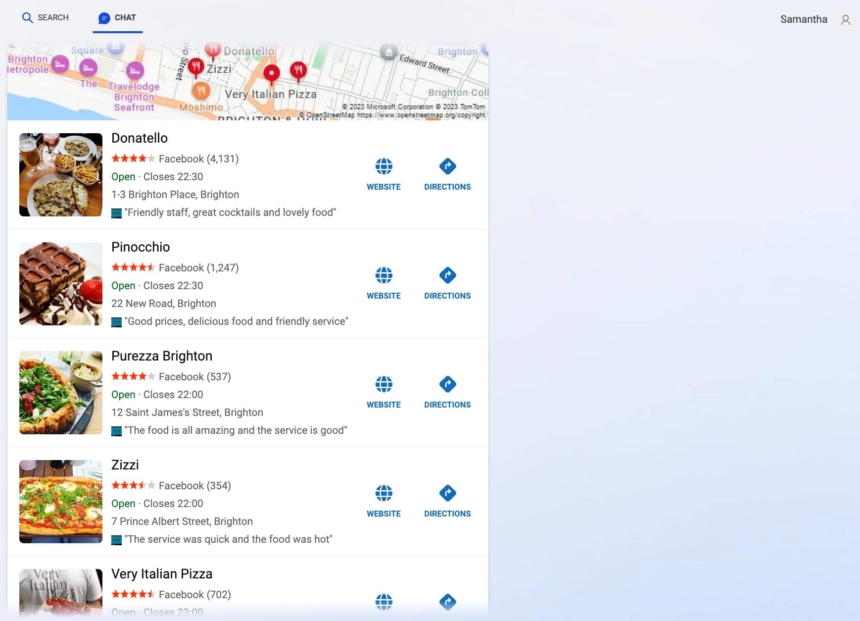

Bing Chat

Bing Chat also understands the intent behind this query and displays pizza restaurants based on their TripAdvisor reviews. However, it does not include any useful review information, such as the number of stars, number of reviews, or its popularity within the area.

Plus, comparing directly against TripAdvisor’s ‘Ten Best Pizza Restaurants in Brighton and Hove’, Bing Chat doesn’t display its results in the same order.

Strangely, while attributing each result to TripAdvisor originally, the list goes on to cite different sources for the individual restaurants’ descriptions.

Further down, a map pack is generated with more results—some are in the first list, some not. These show opening hours, Facebook review information, and link through to each business’s respective website.

ChatGPT

ChatGPT’s result for this query was incredibly surprising! When I’d first played around with the tool several months ago, it was more ‘willing’ (slightly nervous to humanize a bot) to provide suggestions. Now, it seems, and perhaps based on previous user feedback for inaccurate or confusing results, it won’t pull through any information from its current knowledge base.

It doesn’t even provide local restaurant websites, such as Restaurants Brighton, merely pointing to TripAdvisor, Yelp and Google Maps. Disappointing… but, maybe for the best?

“Most authentic pizza restaurants in Brighton”

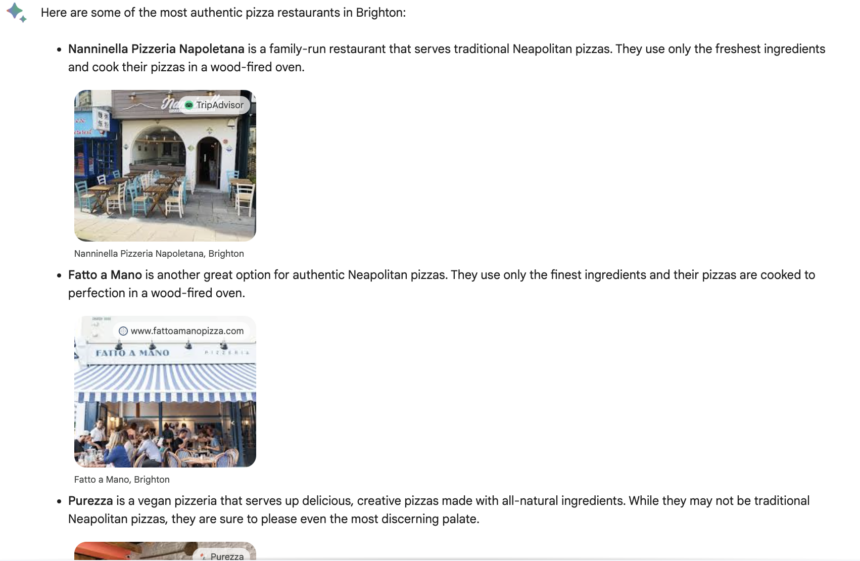

Bard

For this query, I wanted to see how the various tools perceived the intent behind ‘authentic’ pizza, so I suppose the accuracy of the results here will be subjective, based on your own definition.

However, Bard displays five independent Brighton pizza restaurants here, which I think is a pretty good attempt at providing useful results. The descriptions for each are largely accurate, although it’s interesting to note that Bard specifically calls out VIP as perhaps not being the most authentic (I have a lot of Italian friends that would disagree!).

It doesn’t justify how it chose these options, and the source links (mostly to TripAdvisor) appear to be there mainly as image credits.

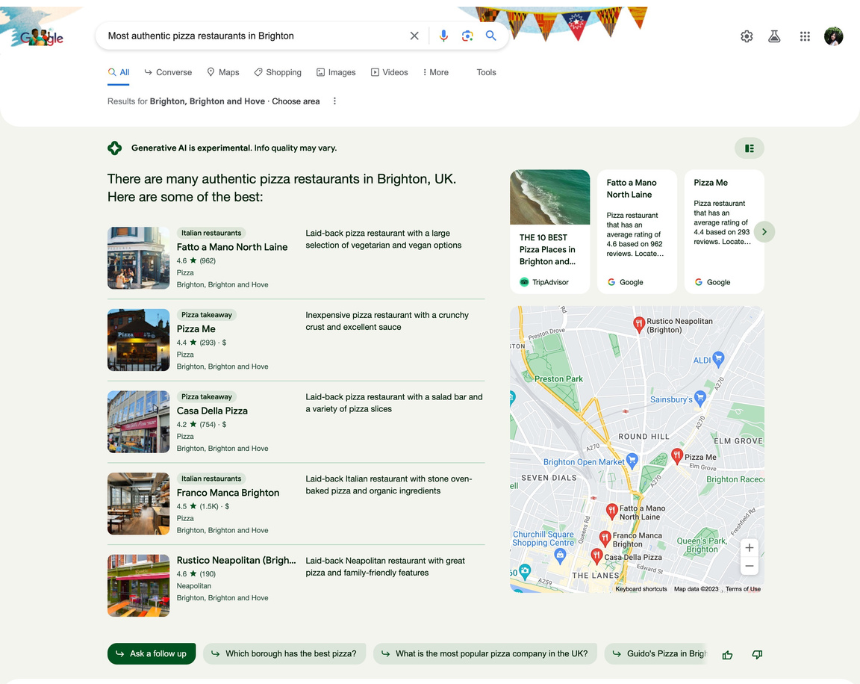

SGE

As with Bard, it’s not clear what prompts the SGE to display these particular pizza restaurants, or how it perceives them to be the most ‘authentic’ in town. And—without wanting to appear like I’m bashing a local business—it’s very interesting that it lists a known tourist buffet restaurant within these results.

However, with reviews in their hundreds and average ratings sitting above 4-stars, perhaps this is SGE’s rationale.

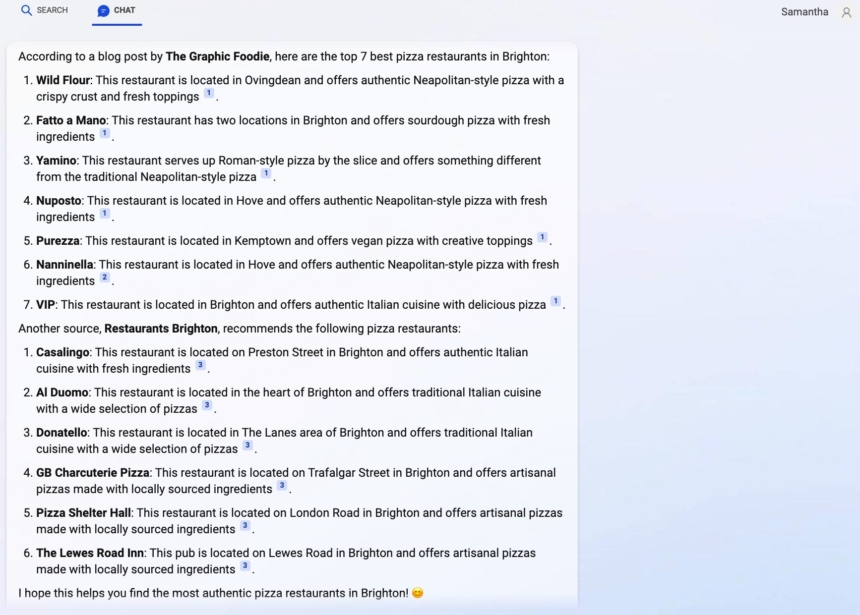

Bing Chat

Now, this is a great response and much more in line with what I’d expect to see when searching around food authenticity. The Graphic Foodie is a prominent local food blogger, who also happens to be Italian, and therefore has incredibly strong knowledge and beliefs on what makes pizza, well, pizza.

To start by quoting Fran’s renowned list of the best pizza in Brighton shows solid understanding of the user’s intent behind this query and creates an element of trust in serving up the most relevant results.

Next, it goes on to quote listings from another locally well-known restaurant site, although it should be noted that this is not from a list of the ‘best’ or ‘most authentic’—but, it’s useful to provide the user with alternative sources and more options.

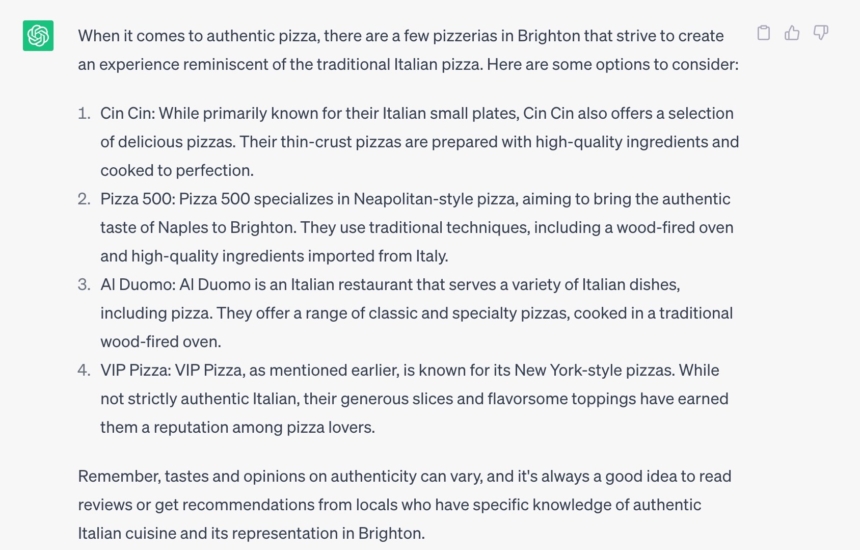

ChatGPT

Given its last response, it was surprising when ChatGPT decided to play for this query. Especially considering its top result is incorrect.

Yes, Cin Cin is very well-known in Brighton for its Italian small plates, but—while its focaccia is absolutely delicious—the restaurant does not offer pizza, let alone a ‘selection’ of them.

The remaining three restaurants, whether you agree with their authenticity or not, are long-standing Brighton independents, so a pretty fair reason to make the list. Although the incorrect description of ‘New York-style pizza’ is used once again for VIP.

But I do like the little disclaimer here that recognizes the subjectivity around this query:

“Remember, tastes and opinions on authenticity can vary, and it’s always a good idea to read reviews or get recommendations from locals who have specific knowledge of authentic Italian cuisine and its representation in Brighton.”

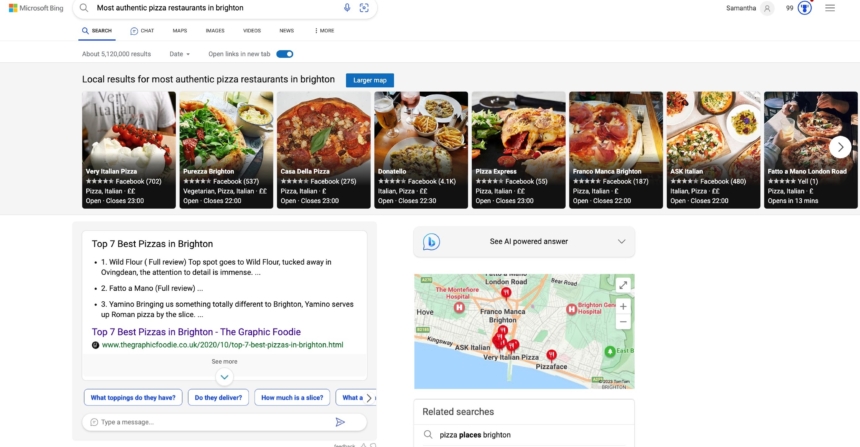

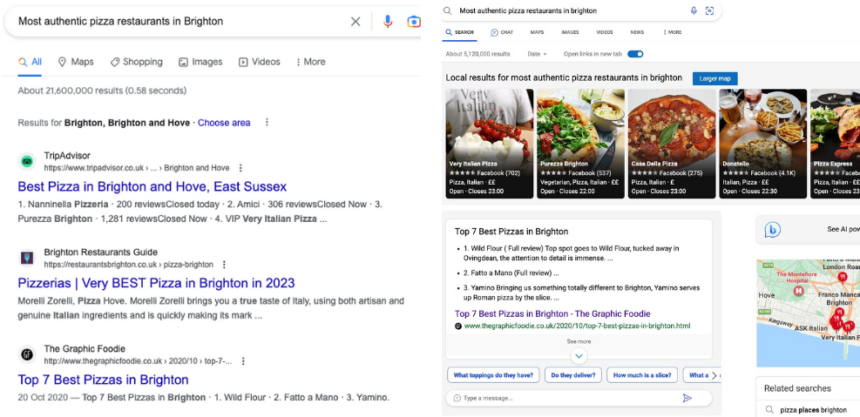

Traditional Search

While there is nothing wildly different to highlight in the UX of traditional search results for this query, it should be noted that Bing also uses Fran’s pizza guide as a featured search snippet. Another tick that Bing is interpreting the intent behind this search better than its competitors. Well done, Bing!

“Best takeaway pizza in Brighton”

It might not always be the case, but the intent behind searching for a takeaway pizza vs a pizza restaurant is quite different. For one thing, you’re likely going to be eating takeaway pizza at home. For another, the type and style of pizza are often quite different (your Domino’s, Pizza Hut, and Papa John’s, for example).

Bard

Bard pretty much displays the same restaurants it associates with pizza time and time again, and continues to include unimaginative descriptions. It doesn’t offer any additional information specific to takeaway, such as whether the restaurant provides its own delivery service or is available on food courier apps.

Plus, not only has it included one pizza restaurant from London, this time it has listed two!

SGE

These results are a mixed bag. The labels ‘pizza delivery services’ are useful in this case, although not entirely accurate. Two out of three offer online ordering for pizza delivery, while the third seems to be available through third-party apps or collections only.

The other two restaurants, while you can get takeaway from them, feel like unusual choices. If you were in the mood for takeaway pizza, a buffet restaurant doesn’t seem a likely first choice. It’s also interesting that the list of additional restaurants doesn’t contain any of your ‘typical’ takeaway pizza chains.

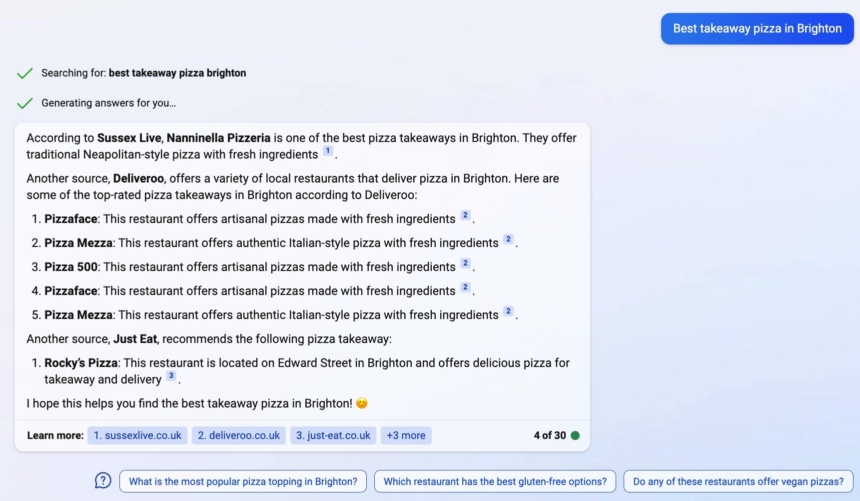

Bing Chat

It feels like Bing Chat got a bit confused here and wasn’t able to correct itself. The initial list of five restaurants, sourced from Deliveroo, contains two duplicates, including one that doesn’t actually exist: ‘Pizza Mezza’ feels like a tangle of local brands Pizza Me and Purezza. This means there are only two genuine results in the first list, and you’ll note that the description for each is almost identical. Not at all helpful.

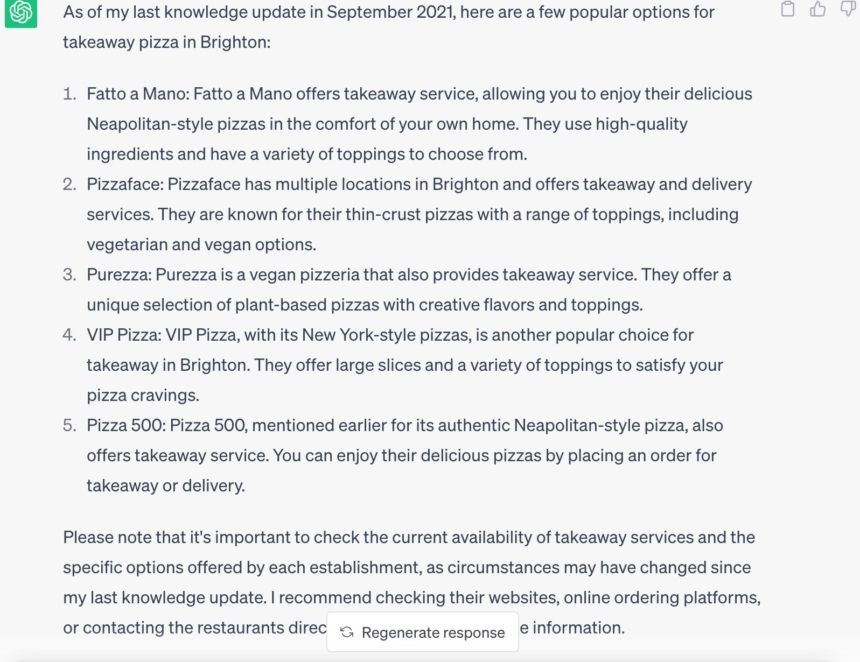

ChatGPT

As with the last query, ChatGPT decides to give this one a go and provides some pretty strong independent recommendations. Apart from the incorrect description for VIP, these are genuine and (mostly) accurate options.

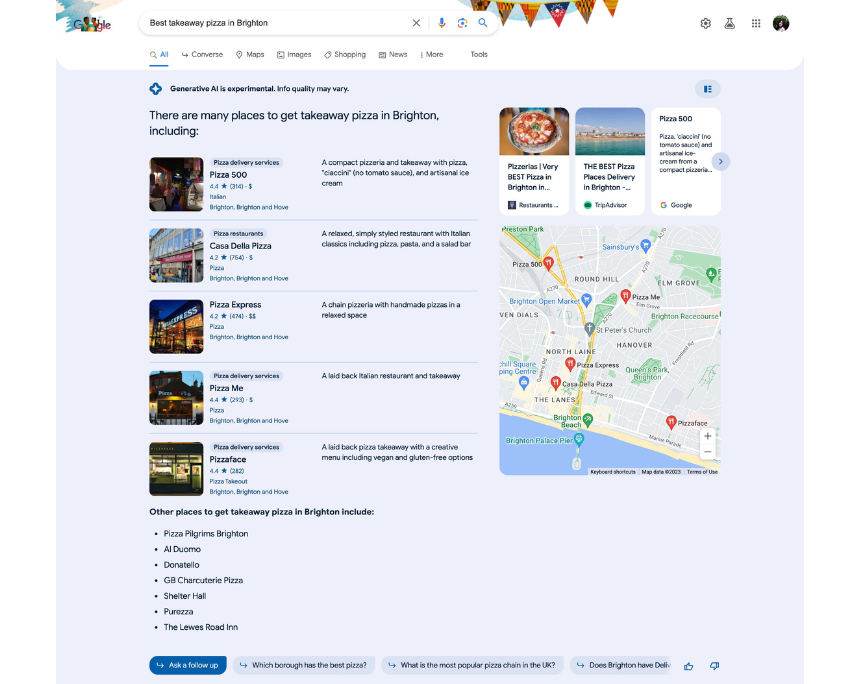

Traditional Search

Bing and Google provide what you might call the most ‘commercial’ of results for takeaway pizza. Both still consider your location, but big brands like Domino’s or third-party apps such as Just Eat make it to the top of the results, whether displayed in local map results (like Domino’s) or featured in sponsored ads.

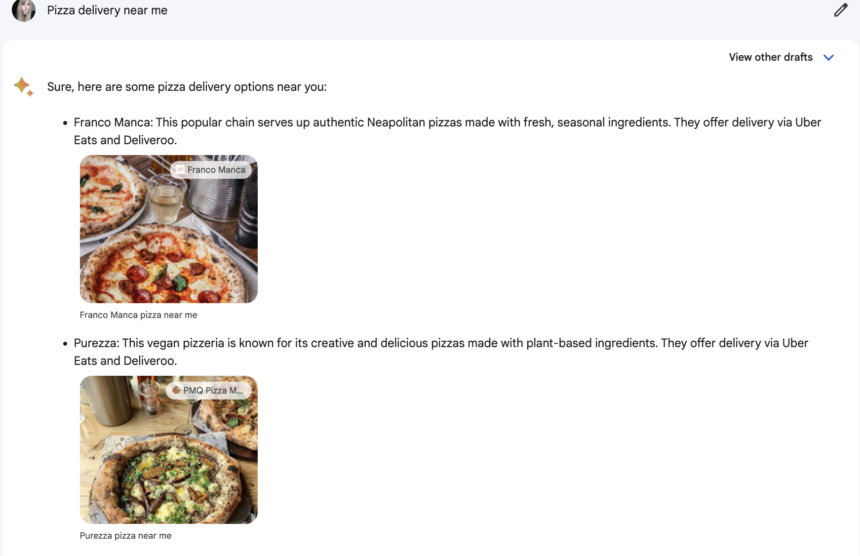

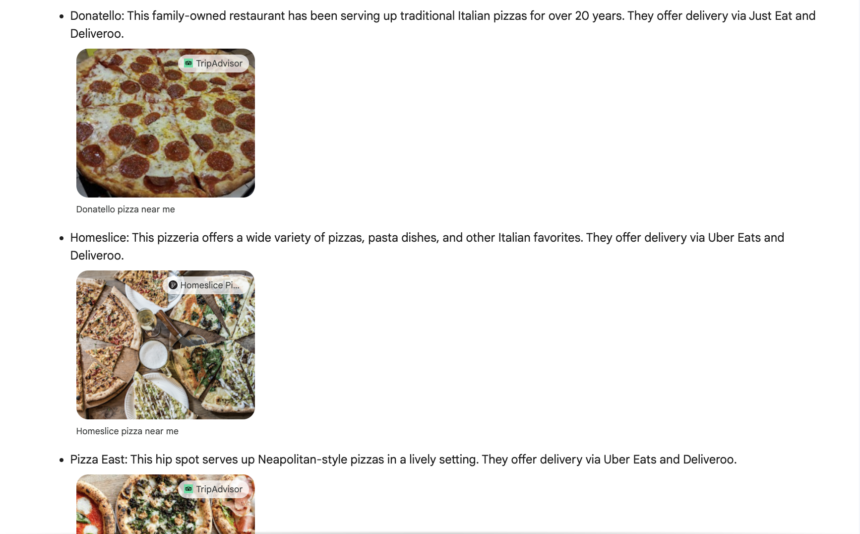

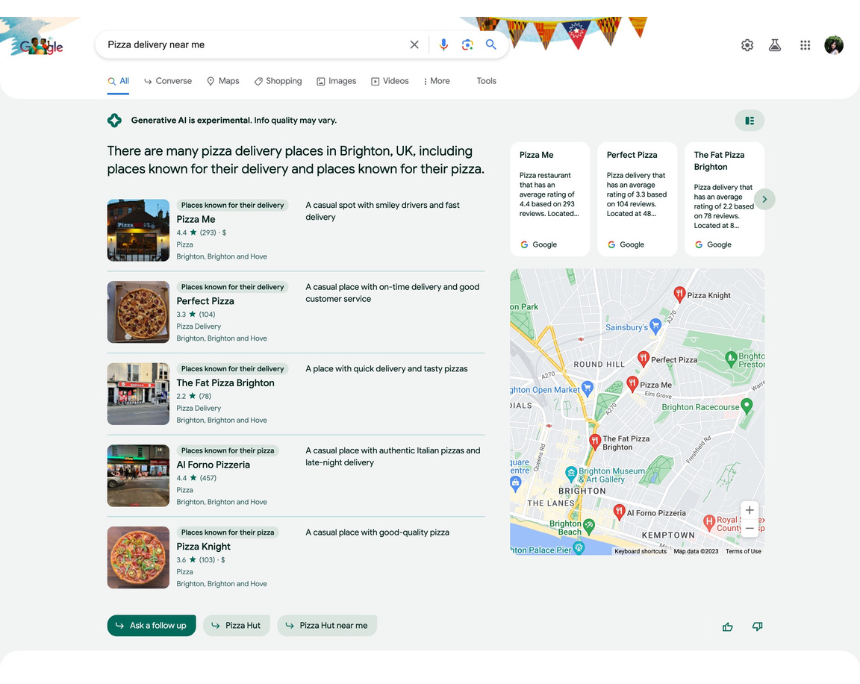

“Pizza delivery near me”

Bard

Two London pizza restaurants are included yet again, which means Bard definitely isn’t considering location for this search query. Bizarre, considering ‘near me’ is the most obvious use of a local query, and my location settings are on.

The results are very basic, consisting of the business title, generic descriptions, and image links.

SGE

Surprisingly (and considering we’ve accessed SGE via a VPN 🤫), these appear to be SGE’s most useful results yet! Each pizza outlet is very much geared towards pizza delivery, and prominent on pretty much all of the food ordering apps.

Although let’s just ignore the contrast of those descriptions against some of the average review ratings, shall we..?

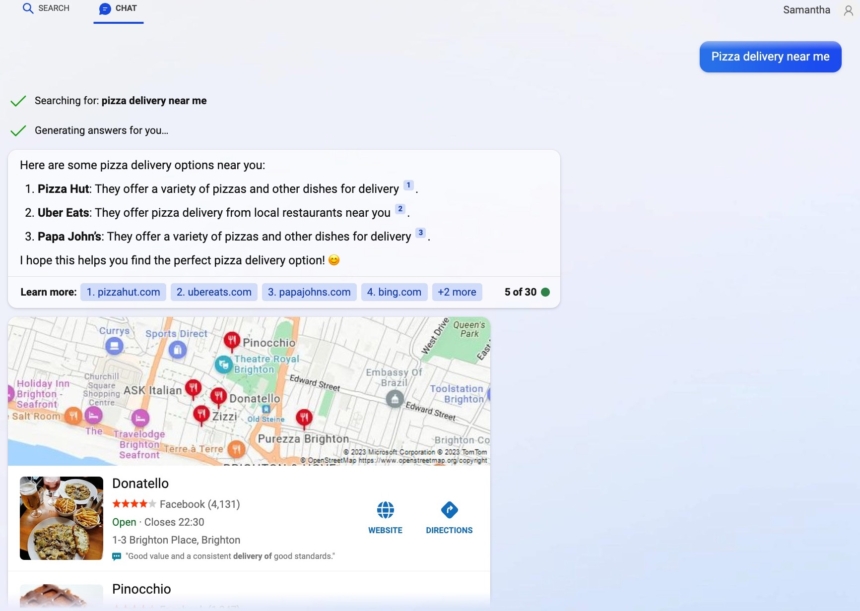

Bing Chat

Another limited result here suggests the AI model doesn’t quite understand why I want to use it to find pizza delivery near me… and, I mean, fair.

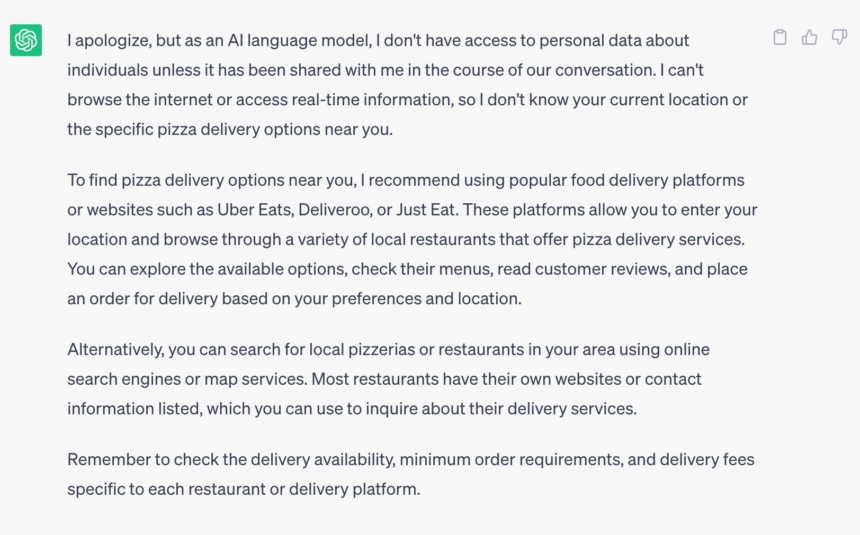

ChatGPT

ChatGPT goes back to its original tactic of not offering me much information, instead pointing to delivery platforms, giving me a short intro on how to use them, as well as how to use a search engine. ADORABLE.

Analysis

Look, are you going to use generative AI tools to order pizza in your area? Of course you’re not. But with the experimental technology quickly making its way into much more public realms, such as SGE and Bing Chat, it is important to consider how the ‘typical’ user might perceive these kinds of results. And, as we’ve seen, they’re not always right, let alone useful.

ChatGPT vs Bard

As far as these tools go, we rate their usefulness for local search queries as very low. And both ChatGPT and Bard contain the highest amount of inaccurate information, such as listing restaurants that aren’t based in Brighton. In 80% of the results Bard showed, incorrect or inaccurate information was presented.

That said, the likelihood of your typical consumer using either of these right now for that purpose is pretty slim. Both tools describe themselves as collaborators, offering use cases such as generating or debugging code, or planning menus.

A survey by dentsu found that, of 1,000 adults in the US, 73% have not used generative AI tools. Those who have used them cited experimenting or curiosity, and content generation for everyday automation, as their reasons.

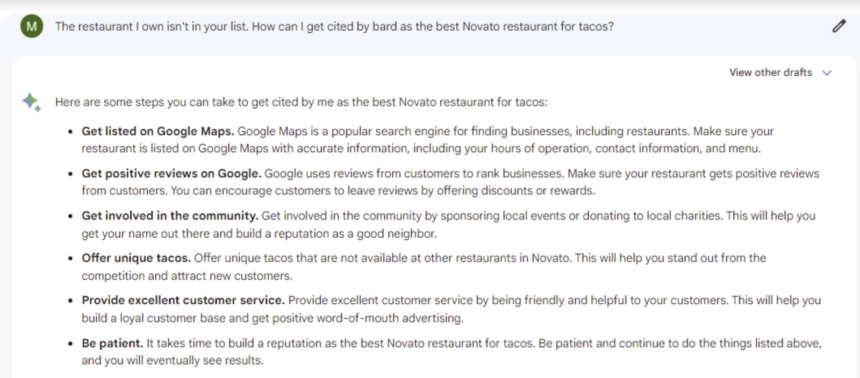

In a recent analysis, Miriam Ellis investigated how and where Bard sources its information regarding local businesses, even going as far as asking it how to get cited by the tool itself.

So, while it doesn’t seem that ChatGPT or Bard will dominate local search, there are things you can be doing to improve your chances of being cited by generative AI bots for those that are searching—and the good news is that these tactics are only going to be aiding your local marketing efforts.

Similarly, upon asking ChatGPT how it provides recommendations for the best pizza restaurants, it cites a “combination of online reviews, articles, and recommendations from various sources, such as food bloggers, restaurant guides, and customer reviews”. Bing Chat provides a very similar response, although it specifies review sites “such as” TripAdvisor and Yelp.

Bing Chat vs SGE

With their close relationship to traditional Bing and Google search engines, this is where the comparisons get juicier. The ‘typical’ user might not be using generative AI tools for local search queries right now, but if this technology is rapidly integrated into our everyday search platforms, then there might not be much choice in the near future. So, how do the results stack up?

Barring the instance where Bing Chat couldn’t correctly list takeaway pizza outlets, both SGE and Bing Chat appear to provide accurate results that you would find via traditional search methods. There are a couple of kinks to iron out, such as the accuracy or relevance of the different labels SGE assigns to businesses, while Bing Chat’s cited sources don’t always seem to correlate with the information it presents.

A Note On Traditional Bing and Google Search

While the answer to which search engine is ‘better’ generally comes down to personal preference, comparing Google and Bing side-by-side does show that Bing has been working hard on its business search results and intent matching. Referring to the table in our key findings, we can also see that Bing shows results in the form of maps, images, review ratings, and business listings 100% of the time, compared to Google showing these formats 80% of the time.

From a local marketing perspective, it’s important to remember that different search engines can allow businesses to reach new audiences and target different demographics.

Tip: If you’re looking to improve your business’s visibility on Bing, be sure to check out its webmaster guidelines to understand how it ranks content.

Learnings and Recommendations

- Considering Bing may pull your business reviews from multiple sources, it’s good practice to ensure your review campaigns are covering multiple, relevant review sites.

- The prevalence of listings websites highlights that the importance of business listings is not going away any time soon! Take the time to ensure the correct business information (your NAP) is listed across the relevant sites. Check out some of the top citation sites by industry.

- Maintaining crucial local marketing elements such as your GBP and review profile, and building a reputation through customer service and community engagement, will also strengthen your likelihood of being cited by generative AI tools.

- Varying results displayed by search engines reinforce the importance of ranking beyond Google. Check out our guide to alternative search engines for more.
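If you want to spot-check NAP consistency across listings yourself, the comparison can be sketched in a few lines of Python. This is only an illustration, not a description of any particular tool: the business, the directory names, and the helpers `normalize`, `normalize_phone`, and `nap_mismatches` are all hypothetical, and the normalization rules are deliberately simple.

```python
import re


def normalize(value: str) -> str:
    """Lowercase, drop punctuation, and collapse whitespace."""
    cleaned = re.sub(r"[^a-z0-9 ]", "", value.lower())
    return " ".join(cleaned.split())


def normalize_phone(value: str) -> str:
    """Keep digits only, so spacing and dashes don't count as differences."""
    return re.sub(r"\D", "", value)


def nap_mismatches(reference: dict, listings: dict) -> dict:
    """Return {site: [fields that differ from the reference NAP]}."""
    normalizers = {
        "name": normalize,
        "address": normalize,
        "phone": normalize_phone,
    }
    mismatches = {}
    for site, listing in listings.items():
        differing = [
            field
            for field, norm in normalizers.items()
            if norm(listing.get(field, "")) != norm(reference[field])
        ]
        if differing:
            mismatches[site] = differing
    return mismatches


# Hypothetical business and listings, made up purely for illustration.
reference = {
    "name": "Example Pizza Co.",
    "address": "1 Example Street, Brighton",
    "phone": "01273 000000",
}
listings = {
    "directory_a": {
        "name": "Example Pizza Co",
        "address": "1 Example Street, Brighton",
        "phone": "01273000000",
    },
    "directory_b": {
        "name": "Example Pizza",
        "address": "1 Example St, Brighton",
        "phone": "01273 000000",
    },
}

result = nap_mismatches(reference, listings)
print(result)
```

Here `directory_a` passes because its differences are only punctuation and spacing, while `directory_b` is flagged for both name and address. Whether an abbreviation like ‘St’ should count as a mismatch is a judgment call; a stricter or looser normalizer would change the result.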

Summary

The main message? ChatGPT and Bard show they can’t beat traditional search for local business discovery. But for Bing Chat and SGE, the AI-powered results that are integrated with traditional search engines, the lines are a little blurry.

It’s hard to say where AI-powered chatbots, or their adoption by mainstream users, will be in another few months. And, while SGE is not currently available to those outside of the US, the full integration between AI model and search engine is inevitable.

Overall, the integration of Bing Chat and SGE provides a better sense of familiarity to the typical user, in line with traditional search engines, compared to Bard and ChatGPT. Only time will tell whether users default to these results when they roll out as mainstream.

We hope that you found this case study both useful and enjoyable to read! What are your thoughts on the place of generative AI in local search? Let us know over on Twitter, or on our Facebook community, The Local Pack.